
Plans behave differently once real traffic and real money flow through the system. A MACH stack becomes less about diagrams and more about elapsed time, partial failure, vendor behavior that changes between releases, and whether operators can explain slow or failed customer journeys end to end.

MACH stands for Microservices, API-first, Cloud-native, and Headless. It trades a single coordinated release train for independent lifecycles. It does not remove incidents. It changes where risk appears and how far a failure can propagate.

This article is for teams moving from pilot to always-on operations. It pairs with an adoption playbook by stressing operating constraints: how integrations behave under pressure, what contract-first work requires in practice, and failure patterns that recur in composable estates. For framing and sequencing, see MACH Architecture and the roadmap article in related reading.

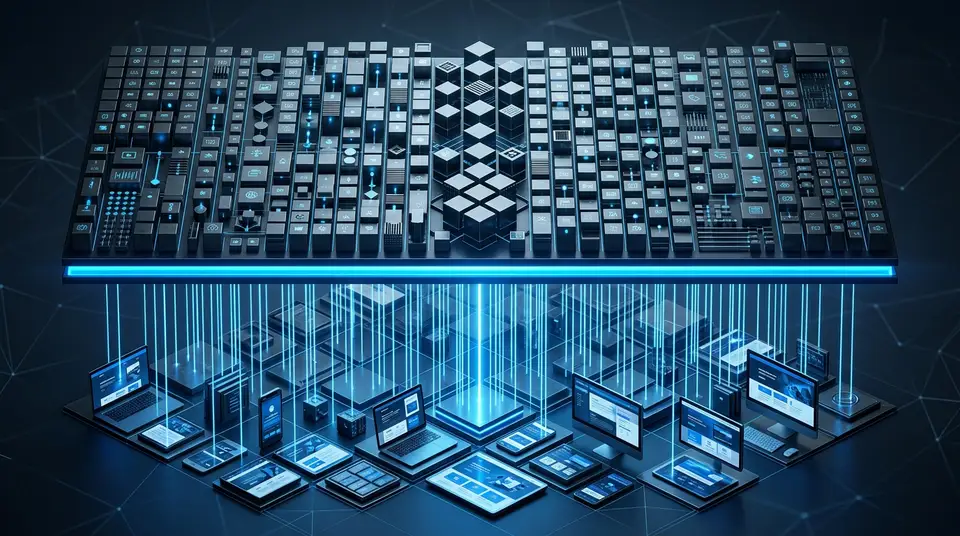

The production shape of a MACH system

In production, MACH usually reads as a dependency graph. Storefronts and apps sit at the edge. Behind them you often find a BFF or orchestration tier, domain capabilities (pricing, promotions, cart, payments, search, content), shared platform services (identity, observability, feature flags), and SaaS boundaries with their own status pages and calendars.

That graph is an asset and a cost. Asset: you can scale, swap, or rewrite a node without rewiring every neighbor. Cost: tail latency and failure compound along paths. A request that was one hop inside a monolith becomes a set of coordinated calls. Each synchronous hop adds variance; each dependency adds an outage class.
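To make the compounding concrete, here is a small arithmetic sketch. It assumes hops fail and slow down independently, which real systems rarely honor, and the 99.9% and p99 figures are illustrative rather than measured.

```python
def serial_availability(per_hop: float, hops: int) -> float:
    """Availability of a path where every hop must succeed (independence assumed)."""
    return per_hop ** hops


def chance_of_slow_request(hops: int, percentile: float = 0.99) -> float:
    """Probability that at least one hop lands above its own latency percentile."""
    return 1.0 - percentile ** hops


# Illustrative inputs only: 99.9% per-hop availability, p99 latency per hop.
for hops in (1, 3, 5, 10):
    availability = serial_availability(0.999, hops)
    slow = chance_of_slow_request(hops)
    print(f"{hops:>2} hops: availability {availability:.4f}, "
          f"chance of hitting a p99 tail {slow:.3f}")
```

Under these assumptions, five serial hops turn a 1% tail into roughly a 5% chance that any given request meets at least one slow dependency.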

The first production skill is often explicit integration design. You decide what stays synchronous, what must not block checkout, what can degrade with clear customer messaging, and where you stop assuming strong consistency spans every vendor.

Sync versus async: pick the integration shape on purpose

Use the table below to sanity-check default choices. It is not a strict rule set; it helps catch accidental coupling before revenue traffic does.

| If the requirement is… | A practical default | Why it matters in MACH |
|---|---|---|
| Immediate user feedback (cart totals, payment authorization) | Short synchronous paths with hard bounds | Long chains explode tail latency and blend failure modes. |
| Eventual alignment (search indexes, recommendations, analytics) | Events, queues, outbox-style handoff | You absorb slower peers without holding the browser open; you pay in ordering and duplication literacy. |
| Money or inventory truth | Idempotent commands plus explicit recovery stories | At-least-once is the common ground; “exactly once” is usually careful design, not a wire guarantee. |

Keep synchronous paths shallow

HTTP (or gRPC) is easy to reason about and easy to chain by accident. For revenue paths, count critical-path hops, meaning calls that must succeed before you can truthfully tell a customer the action is complete. More than a small handful of synchronous boundaries usually means you accepted latency and fragility that will surface under load.

Guardrails worth enforcing:

- Timeouts everywhere. If your service does not choose one, the network will, often at the worst time.

- Retries with intent. Retries amplify load. Use jitter, cap attempts, and match retry policy to idempotency (see below).

- Bulkheads. Isolate connection pools, threads, and queues so one chatty integration cannot starve a shared front door.
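The retry guardrail above can be sketched as follows. This is a minimal illustration, not a production client: `TransientError` and `call_with_retries` are hypothetical names, the delays are placeholders, and the wrapped operation is assumed to carry its own timeout and to be safe to repeat.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures that are safe to retry."""


def call_with_retries(
    operation: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.1,
    max_delay: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run operation, retrying transient failures with capped, jittered backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise  # Retry budget spent: surface the failure to the caller.
            # Full jitter: wait a random time up to the capped exponential delay,
            # so that many clients do not retry in lockstep.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, ceiling))
    raise AssertionError("unreachable")
```

Only errors the contract marks as retryable should map to `TransientError`; everything else propagates on the first attempt.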

Make asynchronous paths operable

Events and queues keep the experience responsive when downstreams stall or disappear. They charge rent in ordering, duplication, replay, backpressure, and operations questions such as why queue depth is climbing.

Invest early in idempotent consumers and observable dead-letter behavior. A costly failure mode is poison messages that fail quietly and surface first as unexplained staleness in downstream systems.
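As a rough sketch of what that investment looks like, the consumer below skips duplicates and parks poison messages where someone can see them. `Message` and `Consumer` are hypothetical types, and the in-memory set and list stand in for the durable dedupe store and dead-letter queue a real deployment would need.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set, Tuple


@dataclass
class Message:
    message_id: str
    payload: dict
    attempts: int = 0


@dataclass
class Consumer:
    handler: Callable[[dict], None]
    max_attempts: int = 3
    seen: Set[str] = field(default_factory=set)  # stand-in for a durable store
    dead_letters: List[Tuple[Message, str]] = field(default_factory=list)

    def process(self, message: Message) -> str:
        # At-least-once delivery means the same message can arrive twice.
        if message.message_id in self.seen:
            return "duplicate"
        message.attempts += 1
        try:
            self.handler(message.payload)
        except Exception as exc:
            if message.attempts >= self.max_attempts:
                # Park the poison message with its error so it is visible,
                # instead of failing quietly forever.
                self.dead_letters.append((message, repr(exc)))
                return "dead-lettered"
            return "retry"
        self.seen.add(message.message_id)
        return "processed"
```

The length of `dead_letters` is the number worth alerting on: it turns unexplained staleness into a named backlog.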

The BFF as composition layer, not a second monolith

A BFF trims client complexity and hides vendor quirks. It can also grow into a micro-monolith where many unrelated deadlines land in one artifact. Aim for narrow scope: composition, response shaping, auth translation, and policy that belongs at your edge, not domain logic that belongs in an owned service.

What API-first means when money moves

“Design APIs before implementation” is easy to say. In production the sharper bar is contract-first operations, where two internal teams, or you and a vendor, can evolve without surprising each other.

What belongs in a contract besides a JSON schema

Treat these as published facts, not informal memory:

- Operations supported and an error taxonomy (what is retryable, what is not)

- Versioning and deprecation windows that respect integrators

- Performance envelopes (expected latency bands, payload norms)

- Auth model (scopes, token lifetimes, rotation expectations)

- Change policy: additive versus breaking, notice channels, and escalation paths

When those stay implicit, teams assume alignment that breaks the first time a producer ships a non-obvious change.

Versioning that teams can follow

There is no single winning scheme (URL segments, headers, negotiation). There is a common failure mode: several informal versions in the wild because one mobile client never upgraded.

Make the rules straightforward:

- Prefer additive evolution.

- Publish deprecation clocks in months, with named owners.

- Wire consumer checks into CI for critical paths (contract tests, schema compatibility gates, replay against recorded fixtures).
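A schema compatibility gate from the list above can start very small. The sketch below assumes a deliberately simplified schema format (field name mapped to a type name) and a hypothetical `breaking_changes` helper; it is not the API of any schema registry, and it only covers response fields that consumers read.

```python
from typing import Dict, List


def breaking_changes(old: Dict[str, str], new: Dict[str, str]) -> List[str]:
    """List changes that would break a consumer reading the old response shape."""
    problems = []
    for name, old_type in old.items():
        if name not in new:
            problems.append(f"removed field: {name}")
        elif new[name] != old_type:
            problems.append(f"type changed: {name} ({old_type} -> {new[name]})")
    # Fields that exist only in `new` are additive and are not reported.
    return problems


v1 = {"cart_id": "string", "total": "number", "currency": "string"}
additive = {**v1, "discount": "number"}
broken = {"cart_id": "string", "total": "string"}

print(breaking_changes(v1, additive))  # no problems reported
print(breaking_changes(v1, broken))    # two problems reported
```

A CI job could fail the build whenever the returned list is non-empty and no deprecation clock has been published for the affected fields.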

Idempotency and the “exactly once” mirage

Distributed mechanics are usually at-least-once. “Exactly once” is most often a careful mix of idempotent handlers and deduplication keys, not something the network promises you.

For payments, holds, fulfillment messages, and subscription changes, define an idempotency key story before launch. Incidents frequently trace to a retry that executed the side effect twice because operators treated HTTP 500 as “no change occurred.”
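The sketch below plays out that incident with and without luck on the wire. `PaymentService`, `LostResponse`, and `flaky_call` are hypothetical, the store is an in-memory dict, and nothing here is safe under concurrency; a real service would need an atomic, durable key check.

```python
class LostResponse(Exception):
    """The side effect happened, but the caller never saw the reply."""


class PaymentService:
    def __init__(self) -> None:
        self.captures = {}  # idempotency key -> stored result
        self.charge_count = 0

    def capture(self, idempotency_key: str, amount_cents: int) -> dict:
        # Replay the stored result instead of charging again.
        if idempotency_key in self.captures:
            return self.captures[idempotency_key]
        self.charge_count += 1  # stands in for the real side effect
        result = {"status": "captured", "amount_cents": amount_cents}
        self.captures[idempotency_key] = result
        return result


def flaky_call(service: PaymentService, key: str, amount_cents: int,
               drop_response: bool) -> dict:
    """Simulate a transport that may lose the reply after the work is done."""
    result = service.capture(key, amount_cents)
    if drop_response:
        raise LostResponse("caller sees an error although the charge succeeded")
    return result


service = PaymentService()
try:
    flaky_call(service, "order-123-capture", 2500, drop_response=True)
except LostResponse:
    pass  # The client cannot tell whether money moved, so it retries.
retry = flaky_call(service, "order-123-capture", 2500, drop_response=False)
print(service.charge_count, retry["status"])
```

Because the retry reuses the same key, the customer is charged once even though the first attempt looked like a failure from the outside.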

Failure modes that arrive first

Composable estates multiply surfaces. These patterns recur because the graph rewards optimism until traffic disagrees.

| Pattern | What it looks like | What usually helps |
|---|---|---|
| Critical-path latency stack | CPU and databases look fine; shoppers time out. | End-to-end tracing, fewer synchronous hops, honest caching with aligned TTLs, graceful degradation (for example last-known-good pricing with a notice). |
| Retry storms and herds | Transient errors spark retries that align; dependency dashboards look like self-inflicted overload. | Exponential backoff with jitter, per-dependency circuit breaking, capped retry budgets, hedging only where you own the cost trade-off. |
| Partial outcomes across services | Cart updated, payment taken, ancillary profile write failed; you need recovery, not a long theory session about distributed transactions. | Disciplined Sagas or compensating steps, human-safe runbooks, UX that never claims success before it is true (“Payment received” only when it is). |
| Semantic drift | The field `availability` meant “sellable online” in one year and “available-to-promise across DCs” later; storefront behavior shows ontology rot without a single coordinated code deploy. | Schema registries, domain language in API docs, scheduled contract reviews tied to roadmap work. |
| Boundary blind spots | Rich dashboards on owned services; vendor pain appears as unexplained 503 until the status page updates. | Dependency maps with owners, synthetic checks on critical journeys, error budgets per material dependency. |
| Secrets spread | Many integrations mean many credential lifetimes; a rotated signing key misses one service for a week. | Centralized secrets, rotation drills, least-privilege scopes, tight discipline on partner-facing APIs. |
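Per-dependency circuit breaking, named in the retry-storm row, can be sketched in a few lines. This is a single-threaded illustration with a hypothetical `CircuitBreaker` class and placeholder thresholds; mature resilience libraries handle concurrency and half-open probing more carefully.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised instead of calling a dependency that is currently failing."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, operation: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                # Fail fast so a struggling dependency is not hit with more load.
                raise CircuitOpenError("call skipped while circuit is open")
            # Cool-down elapsed: fall through and allow one trial call.
        try:
            result = operation()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

One breaker instance per dependency keeps a failing vendor from tripping calls to healthy neighbors.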

Observability that names the hop

If you cannot answer which hop, you cannot run MACH responsibly.

A minimum useful baseline:

- Correlation identifiers through your services (and from vendors where they cooperate)

- Structured logs with stable fields (tenant, journey, cart id, within privacy policy)

- Traces from BFF through domain services to vendor calls

- SLIs and SLOs anchored to shopper-visible outcomes (checkout success, search latency), not only pod CPU

Bias alerts toward symptoms (checkout success dropped) with drill-down to causes. If alerts are mostly arbitrary infrastructure thresholds, you will chase ghosts while revenue leaks.
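A correlation identifier and structured log line, the first two items in the baseline above, can be sketched with the standard library alone. The `X-Correlation-ID` header name is an assumed convention rather than a standard (W3C Trace Context defines `traceparent` for tracing), and `start_request`, `outbound_headers`, and `log_event` are hypothetical helpers.

```python
import contextvars
import json
import uuid

# Holds the identifier for the request currently being handled.
correlation_id = contextvars.ContextVar("correlation_id", default=None)


def start_request(incoming_headers: dict) -> str:
    """Reuse the caller's identifier if present, otherwise mint a new one."""
    cid = incoming_headers.get("X-Correlation-ID") or str(uuid.uuid4())
    correlation_id.set(cid)
    return cid


def outbound_headers() -> dict:
    """Headers to attach to every downstream or vendor call."""
    return {"X-Correlation-ID": correlation_id.get()}


def log_event(hop: str, event: str, **fields) -> str:
    """Emit one JSON log line with stable field names, naming the hop."""
    record = {"correlation_id": correlation_id.get(), "hop": hop,
              "event": event, **fields}
    line = json.dumps(record, sort_keys=True)
    print(line)
    return line


start_request({"X-Correlation-ID": "abc-123"})
log_event("bff", "pricing_call_finished", duration_ms=42, outcome="ok")
```

With the same identifier on every hop, a log search for one value reconstructs the journey and shows which call consumed the time.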

Cloud-native rigor as shared baseline

Cloud-native in production is less about where machines run than about repeatable discipline: immutable artifacts, audited promotion between environments, infrastructure-as-code, restores, failovers, and game days. Critical APIs that cross teams should share a platform baseline (network policy, certificates, gateway configuration). Otherwise each team reinvents a partial control plane.

Capacity work should be dependency-aware: model burst scenarios (launches, campaigns, payment retries, flash inventory), not only steady read load.

Headless content and the edges people forget

Headless fits MACH when channels need independent lifecycles. Production surprises cluster around preview, personalization, cache invalidation, and stale CDN behavior. An editorial change can be “live” while edge caches serve another version for minutes when purge semantics drift.

Treat cache keys, purge contracts, and preview authentication as part of the CMS integration with the same rigor you apply to commerce APIs.

A maturity ladder you can audit

Use the steps below as a review checklist with engineering and product leadership.

- Measured. Traces and logs let you name the slow hop on a critical journey.

- Bounded. Timeouts, retries, and bulkheads stop one bad neighbor from taking your edge offline.

- Contracted. Public interface changes are versioned, tested, and announced with migration time.

- Recoverable. Idempotency keys and compensations exist on paths that move money or inventory.

- Understandable. Incident reviews speak in business outcomes, not only pod names.

Summary

MACH Architecture distributes autonomy across services and vendors. Production distributes accountability along the same edges. Every integration is a promise. Promises need owners, tests, telemetry, and maintenance cycles. If you fund contracts and observability with the same seriousness as procurement, failures tend to be smaller, explainable, and recoverable instead of unclear escalations.

For earlier-stage sequencing and playbook-level guidance, use the adoption article and pillar pieces in related reading; they are the front half of the story this runtime view assumes you are already living.